Running k0s with Private Worker Nodes (Ingress on Control Plane, Workloads on Workers)


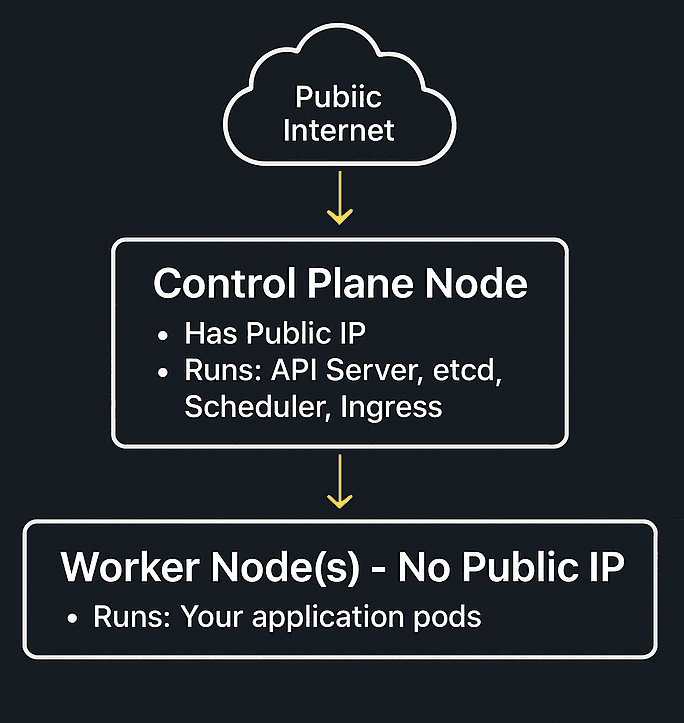

If you have used AKS, EKS, k3s, or even full Kubernetes before, you have probably seen clusters where every node gets a public IP. That works, but it’s not ideal, especially if you care about security or you are running a homelab or a small cloud setup.

Recently, I experimented with k0s, a lightweight Kubernetes distribution. During the setup, I wanted something specific:

Control Plane node: Public IP, runs API Server + Ingress

Worker nodes: no public IP, completely private

Applications run only on workers

Everything still reachable through Ingress

It turns out k0s makes this surprisingly clean.

What is k0s, and how is it different from k3s?

If you have used k3s, you know the model:

k3s server = control plane + worker components bundled

k3s agent = worker node

Everything is tightly packaged. Convenient, but sometimes too tightly.

k0s works differently:

| Thing | k0s | k3s |
| --- | --- | --- |
| Control plane | Runs only on controller nodes | Runs on server nodes (server = controller + worker unless told otherwise) |
| Worker nodes | Run only workload components | Agent nodes run workloads |
| Datastore | etcd by default, managed by k0s; SQLite or an external database via kine is also possible | SQLite by default; embedded etcd or an external database is optional |
| Network | kube-router by default, Calico optional | flannel by default |
| Binary | Single k0s binary | Single k3s binary, with some patched components |

The key difference:

With k0s, the control plane is cleanly separated.

Controller nodes do not run pods unless you explicitly tell them to (with the --enable-worker flag at install time).

This is perfect for a setup where the control plane is publicly accessible and worker nodes stay private.

Goal Setup

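The worker nodes only need to be able to reach the controller’s private IP: port 6443 for the Kubernetes API and, in a default k0s setup, port 8132 for konnectivity. Pod-to-pod traffic between the nodes also stays on the private network.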

No public exposure at all.
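Before joining a worker, it can help to confirm from the worker that the controller's private address is actually reachable. The sketch below is one way to do that using bash's /dev/tcp feature. The helper names `port_open` and `check_controller` are hypothetical, the address `10.0.0.4` in the usage comment is a placeholder, and port 8132 (konnectivity in a default k0s setup) is an assumption about your configuration.

```shell
#!/usr/bin/env bash
# port_open HOST PORT: succeed if a TCP connection can be opened within 3 seconds.
# Relies on bash's /dev/tcp pseudo-device and the coreutils `timeout` command.
port_open() {
  timeout 3 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# check_controller HOST: report reachability of the ports a worker is assumed
# to need (6443 = Kubernetes API, 8132 = konnectivity). Returns non-zero if
# any of them cannot be reached.
check_controller() {
  local host="$1" port rc=0
  for port in 6443 8132; do
    if port_open "$host" "$port"; then
      echo "$host:$port reachable"
    else
      echo "$host:$port NOT reachable"
      rc=1
    fi
  done
  return "$rc"
}

# Example (placeholder address): check_controller 10.0.0.4
```

Run it on the worker before and after removing the public IP; the result should be the same both times if the private path is what the worker is really using.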

Installing the Controller Node

SSH into the controller VM:

curl -sSLf https://get.k0s.sh | sudo sh

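sudo k0s install controller --enable-worker

sudo systemctl start k0scontroller

The --enable-worker flag also starts a kubelet on the controller, which is what allows the ingress pods to run there. By default k0s taints such a node, so regular workloads stay off it unless they carry a matching toleration.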

Get kubeconfig so you can run kubectl:

sudo k0s kubeconfig admin > kubeconfig

export KUBECONFIG=$(pwd)/kubeconfig

Generate a join token for workers:

sudo k0s token create --role=worker > mytoken
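The join token embeds the API server address the worker will dial, so it is worth confirming that it points at the controller's private IP before any public IP is removed. The sketch below assumes the token is a base64-encoded, gzip-compressed kubeconfig, which is how k0s join tokens are commonly described; if your k0s version encodes tokens differently, the helper will simply fail to decode. `token_server` is a hypothetical helper name.

```shell
# token_server FILE: print the API server URL(s) embedded in a k0s join token.
# Assumption: the token is base64(gzip(kubeconfig)).
token_server() {
  base64 -d < "$1" | gunzip -c | awk '$1 == "server:" { print $2 }'
}

# Example: token_server mytoken
```

If the printed URL shows a public address, the worker would lose its route to the API once the public path goes away, so check this before moving on.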

Adding a Worker Node (Before Removing Public IP)

SSH into the worker node:

curl -sSLf https://get.k0s.sh | sudo sh

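Copy the mytoken file from the controller to the worker, then install the worker service. An absolute path is the safer choice here, since the service reads the token file when it starts:

sudo k0s install worker --token-file "$(pwd)/mytoken"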

sudo systemctl start k0sworker

Check status from controller:

sudo k0s kubectl get nodes

If you see the worker as Ready, you are set!
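To check readiness from a script rather than by eye, one option is to filter the `kubectl get nodes` output. This is a minimal sketch that assumes the default column layout (NAME first, STATUS second); `not_ready_nodes` is a hypothetical helper name.

```shell
# not_ready_nodes: read `kubectl get nodes --no-headers` output on stdin and
# print the name of every node whose STATUS column is not exactly "Ready"
# (so a cordoned node showing "Ready,SchedulingDisabled" is listed too).
not_ready_nodes() {
  awk '$2 != "Ready" { print $1 }'
}

# Example: sudo k0s kubectl get nodes --no-headers | not_ready_nodes
```

Empty output means every listed node is Ready. If you would rather block until that is true, `kubectl wait --for=condition=Ready node --all --timeout=120s` is an alternative.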

Now, Remove the Worker Node Public IP

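The worker initiates its connections to the control plane over the private network, so it doesn’t need to be publicly reachable.

Remove the public IP (the exact steps depend on your cloud or hypervisor).

As long as private network connectivity stays in place, Kubernetes continues working. Keep in mind that the worker still needs some outbound path, such as a NAT gateway or a registry reachable on the private network, to pull container images.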

Why does this still work?

Because:

Pods talk to each other over the cluster network (CNI)

Ingress runs on the control plane node

Traffic enters the cluster through the control plane, not worker nodes
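For the second point to hold, the ingress controller has to be scheduled on the controller node. In k0s that means the node must also run a kubelet (the --enable-worker flag), and the ingress pods must tolerate whatever taint the node carries. The sketch below prints a patch that pins a Deployment to one node. The helper name `pin_patch`, the node name `controller-0`, and the ingress-nginx names in the usage note are all placeholders, since this article does not name a specific ingress controller.

```shell
# pin_patch NODE: print a patch (YAML) that pins a Deployment's pods to NODE
# via the standard kubernetes.io/hostname label and lets them tolerate any
# taint on that node (a toleration with operator Exists and no key matches
# every taint).
pin_patch() {
  cat <<EOF
spec:
  template:
    spec:
      nodeSelector:
        kubernetes.io/hostname: "$1"
      tolerations:
        - operator: Exists
EOF
}
```

Assuming an ingress-nginx style install, it could be applied with something like `sudo k0s kubectl -n ingress-nginx patch deployment ingress-nginx-controller --patch "$(pin_patch controller-0)"`. Tolerating every taint is deliberately broad for a sketch; in practice you would probably narrow it to the taint you see in `kubectl describe node`. How the ingress ports are exposed on the node (host network, NodePort, and so on) is a separate choice not covered here.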

Traffic Flow

The worker node never needs to accept any public connections.

Nice and clean.

We can test it out by deploying a sample application, which we can talk about in a different article!

Why This Setup Is Useful

| Benefit | Why it matters |
| --- | --- |
| Better security | Worker nodes are never exposed |
| Lower cloud cost | Fewer public IPs |
| Predictable traffic path | Ingress is the single entry point |
| Production-like architecture | Many production clusters keep their workers on private networks |

Managed Kubernetes services also let you keep workers behind private networking, and here we get to see how it is done!

Final Thoughts

I have used k3s a lot, but k0s seems like the next big thing in a small package!

The control plane feels like a "brain" again, instead of every node running a bit of everything.

If your goal is:

A secure small cluster

Proper control/data plane separation

Workers without public exposure

k0s is a great fit.